Java consistently ranks among the most popular programming languages. If you want to become a software developer, proficiency in Java is a good way to get your foot in the door, whether in web, mobile or desktop application development. In this article, we’ll take you through the basics. Keep reading for our overview of primitive data types in Java.

Variable Types in Java

Computer programs typically involve data and instructions to manipulate that data. Computers also need a way of accessing data. However, since many kinds of information may reside across a computer’s memory, how do we instruct a computer to access the exact piece of information that we need?

Rather than combing through the entire memory for the relevant chunk, we can assign that chunk a name. The computer then associates that part of memory with the name, so the next time you use the name, the computer knows where to look. This, in a nutshell, is how variables work.

When you create a variable in Java, you’re instructing the Java Virtual Machine (JVM) to allocate a segment of your computer’s memory for storing and retrieving information. However, students of Java know that not all variables are created equal. Variable sizes may vary drastically: sometimes you might store little data (e.g., a single number), whereas other times you might store a lot of it (e.g., millions of numbers). Variables may also differ in the type of data they store.

Strong Typing in Java

We’ve established that variables may differ in size and the type of information they store. Both of these factors are determined by the variable’s data type.

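When writing code in Java, you are required to declare a data type every time you create a variable. This is a characteristic of statically typed languages: the type of every variable is known when the program is compiled. Java is also strongly typed, meaning it refuses to silently convert values between unrelated types, such as booleans and numbers. (Since Java 10, the var keyword lets the compiler infer a local variable’s type from its initial value, but the variable still gets one fixed type at compile time.) Although this might initially sound like an arbitrary constraint, there’s a good reason for it: it stops the program from doing things you do not want it to do. Languages that do not enforce these rules decide types only while the program runs and may convert values behind the scenes, which can result in unexpected behavior even though the program runs without any error.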

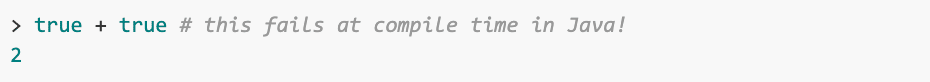

As a result, mistakes such as the following can happen:

In some other programming languages, such as JavaScript, “true” is a value of the boolean data type, which has exactly two values: “true” and “false”. Addition is not defined for booleans, so when a program evaluates “true + true”, JavaScript silently converts each “true” to the number 1. Once converted, the two numbers are added together and the program outputs 2, which might not be the result you expected.

The equivalent code would not compile in Java, precisely because the addition operator (“+”) is not defined for booleans. Every data type in Java is strictly defined in terms of the kind of data it represents and the operations it supports, so requiring every variable to have a type prevents mistakes caused by ambiguous, silently converted values like the one in the example above.
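If you really do want to count true values as 1 in Java, you have to say so explicitly, because there is no cast from boolean to int. The sketch below uses a hypothetical helper, toInt, built on the ternary operator; it is an illustration, not a JDK method.

```java
// Sketch: Java rejects `true + true`, so a boolean-to-number conversion
// has to be written out. `toInt` is a hypothetical helper, not part of the JDK.
class BooleanArithmetic {

    // Map true to 1 and false to 0 with the ternary operator.
    static int toInt(boolean value) {
        return value ? 1 : 0;
    }

    public static void main(String[] args) {
        boolean first = true;
        boolean second = true;

        // int wrong = first + second; // does not compile: + is not defined for boolean
        int sum = toInt(first) + toInt(second);
        System.out.println(sum); // prints 2
    }
}
```

The result matches the JavaScript output, but here the conversion is visible in the code instead of happening silently.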

Java distinguishes between two main categories of data types: primitive and non-primitive. In the following sections, we’ll focus primarily on primitive data types.

Primitive Java Types

Primitive types are Java’s fundamental data types. There are eight of them, grouped into four kinds: integers (byte, short, int and long), floating-point numbers (float and double), booleans (boolean) and characters (char). Primitive types are limited in the kinds of data and values they represent, but they form the basis for creating more complex, user-defined data types.

Integers

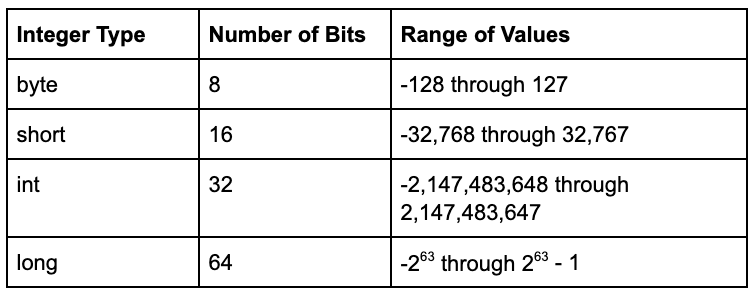

Integers are a data type used for representing whole numbers, such as 20 or -5. Java defines four integer types: byte, short, int and long. These types vary in the number of bits they take up in memory, and thus in the range of values they encompass.

For example, a byte takes up 8 bits, so it can represent 2^8 = 256 distinct values. Half of these (128) represent negative values, down to -128; one represents zero; and the remaining 127 represent positive values, up to 127. In general, an n-bit integer type covers the range -2^(n-1) to 2^(n-1) - 1. The table below illustrates this for all integer types.
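Rather than memorizing these ranges, you can ask Java for them: each integer type has a wrapper class (Byte, Short, Integer, Long) with SIZE, MIN_VALUE and MAX_VALUE constants. A minimal sketch; the describe helper is just an illustrative name.

```java
// Print the size in bits and the value range of each integer type.
class IntegerRanges {

    // Format one row: type name, size in bits, smallest and largest value.
    static String describe(String name, int bits, long min, long max) {
        return name + ": " + bits + " bits, " + min + " to " + max;
    }

    public static void main(String[] args) {
        // Each wrapper class exposes SIZE (in bits), MIN_VALUE and MAX_VALUE.
        System.out.println(describe("byte", Byte.SIZE, Byte.MIN_VALUE, Byte.MAX_VALUE));
        System.out.println(describe("short", Short.SIZE, Short.MIN_VALUE, Short.MAX_VALUE));
        System.out.println(describe("int", Integer.SIZE, Integer.MIN_VALUE, Integer.MAX_VALUE));
        System.out.println(describe("long", Long.SIZE, Long.MIN_VALUE, Long.MAX_VALUE));
    }
}
```

Running it prints:

byte: 8 bits, -128 to 127
short: 16 bits, -32768 to 32767
int: 32 bits, -2147483648 to 2147483647
long: 64 bits, -9223372036854775808 to 9223372036854775807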

Defining a variable’s type is as simple as writing the type’s keyword before the variable’s name:
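A minimal sketch of declarations for each integer type; the variable names and values are illustrative. Note the L suffix on the long literal: without it, Java treats a whole-number literal as an int, and a value this large would not compile.

```java
class IntegerDeclarations {

    public static void main(String[] args) {
        // Type keyword first, then the variable name, then an optional initial value.
        byte temperature = -5;
        short year = 2024;
        int population = 1_500_000; // underscores are allowed as digit separators
        long metersPerLightYear = 9_460_730_472_580_800L; // L marks a long literal

        System.out.println(temperature + " " + year + " " + population + " " + metersPerLightYear);
    }
}
```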

It’s a good idea to stick to types that capture the range of values you want to store; however, this used to be much more important than it is today. Historically, when memory was expensive and processors were slow, it was important to optimize for both. Nowadays, memory is seldom an issue on desktop and server systems. Additionally, Java performs arithmetic on byte and short values only after promoting them to int (32 bits), and modern CPUs handle 32-bit and 64-bit values natively. Therefore, although it might not be intuitive, using smaller types will generally not make computations faster, as discussed in this Stack Overflow thread.
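You can see this promotion rule in action: adding two byte values produces an int, and storing the result back in a byte requires an explicit cast that keeps only the lowest 8 bits. The helper names below are illustrative.

```java
class NumericPromotion {

    // Both byte operands are promoted to int before the addition,
    // so the expression a + b has type int.
    static int addBytes(byte a, byte b) {
        return a + b;
    }

    // Narrowing back to byte needs an explicit cast, which keeps only
    // the lowest 8 bits and can therefore wrap around.
    static byte addBytesNarrowed(byte a, byte b) {
        return (byte) (a + b);
    }

    public static void main(String[] args) {
        byte a = 100;
        byte b = 100;

        // byte c = a + b; // does not compile: possible lossy conversion from int to byte
        System.out.println(addBytes(a, b));         // prints 200
        System.out.println(addBytesNarrowed(a, b)); // prints -56 (200 wrapped into the byte range)
    }
}
```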

Of course, using appropriate types remains good practice in scenarios where saving memory matters, such as when working on embedded devices with limited memory. An array (a collection of multiple elements of a given data type) of bytes takes up significantly less memory than an array of longs of the same length. In an array of one billion elements, the choice between bytes and longs is the difference between the array occupying roughly 1 GB and 8 GB of memory.
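The 1 GB versus 8 GB figure is simple multiplication: element count times element size. The sketch below, with an illustrative helper named payloadBytes, computes it from the Byte.BYTES and Long.BYTES constants (available since Java 8). It is a rough estimate that ignores the small fixed header every Java array carries, and it does not actually allocate the arrays.

```java
class ArrayMemory {

    // Bytes needed for the element data alone (array header overhead ignored).
    static long payloadBytes(long length, int bytesPerElement) {
        return length * bytesPerElement;
    }

    public static void main(String[] args) {
        long length = 1_000_000_000L; // one billion elements

        // Byte.BYTES is 1 and Long.BYTES is 8.
        System.out.println("byte[]: " + payloadBytes(length, Byte.BYTES) + " bytes"); // 1000000000, about 1 GB
        System.out.println("long[]: " + payloadBytes(length, Long.BYTES) + " bytes"); // 8000000000, about 8 GB
    }
}
```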

Floating-Point Numbers

Floating-point types are used to represent numbers with a fractional component, such as 0.75. There are two floating-point data types in Java: floats and doubles.

Both types follow IEEE 754, the floating-point standard published by the Institute of Electrical and Electronics Engineers (IEEE): a float uses the 32-bit single-precision format, and a double uses the 64-bit double-precision format. The number of bits determines the type’s precision (as well as its range): a float holds roughly 7 significant decimal digits, whereas a double holds roughly 15 to 16.
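A small experiment makes the precision gap visible. The sketch divides 1 by 3 in both types, then stores 16,777,217 (2^24 + 1), the smallest positive whole number a float cannot represent exactly.

```java
class FloatingPrecision {

    public static void main(String[] args) {
        // The same division in 32-bit and 64-bit floating point.
        float oneThirdFloat = 1.0f / 3.0f;
        double oneThirdDouble = 1.0 / 3.0;
        System.out.println(oneThirdFloat);  // prints 0.33333334
        System.out.println(oneThirdDouble); // prints 0.3333333333333333

        // A float has a 24-bit significand, so 2^24 + 1 = 16,777,217
        // rounds to 16,777,216; a double stores it exactly.
        float bigFloat = 16_777_217;
        double bigDouble = 16_777_217;
        System.out.println(bigFloat);  // prints 1.6777216E7
        System.out.println(bigDouble); // prints 1.6777217E7
    }
}
```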

The rounding is fundamental to how floating-point numbers are represented in memory. This is an interesting topic but is beyond the scope of this article, so check out this article if you want to learn more.

Note that Java treats floating-point literals, such as 0.75, as doubles by default. If you want to use a float, you need to add the “f” or “F” suffix, like so:
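A minimal sketch of the difference; the variable names are illustrative. Assigning an unsuffixed decimal literal to a float is rejected because it would narrow a double.

```java
class FloatLiterals {

    public static void main(String[] args) {
        double ratio = 0.75;    // a plain decimal literal has type double
        float price = 0.75f;    // the f (or F) suffix makes it a float literal
        // float broken = 0.75; // does not compile: possible lossy conversion from double to float

        float converted = (float) ratio; // an explicit cast also works, but may lose precision
        System.out.println(ratio + " " + price + " " + converted); // prints 0.75 0.75 0.75
    }
}
```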

This difference in precision means that doubles are generally preferred over floats, especially for applications in which the accuracy of calculations is important. Of course, the same memory considerations apply here as with integers: it makes sense to use floats in an application like computer graphics, where optimizing for memory and speed matters more than the last few digits of precision.

Booleans

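A boolean is a data type that can take only two values: true or false. Conceptually, a boolean needs only a single bit of information, but the Java specification does not define its exact size in memory, and unlike in some other languages, Java booleans cannot be converted to or from the integers 1 and 0.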

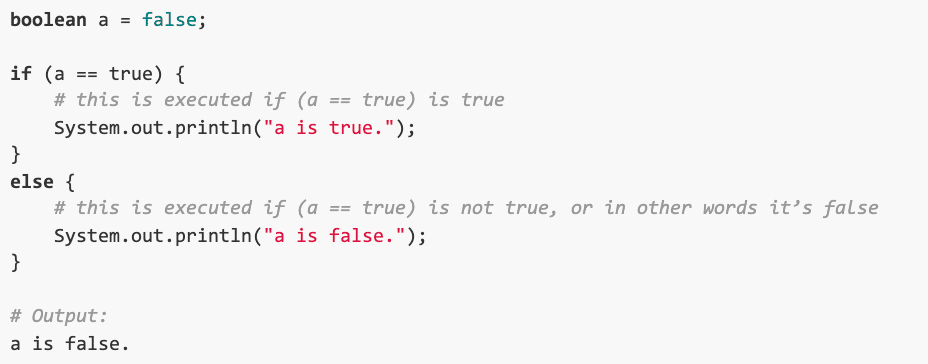

Relational operators such as “less than” (<) or “greater than” (>) return boolean values. Programs often use these results to control the order in which their instructions are executed. In the example below, whether the println statement runs depends on whether the expression (a == true) evaluates to true or false.
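The original example is not reproduced in this text. A minimal reconstruction, assuming a is a boolean set to false (the printed messages are placeholders), might look like this; it also shows a relational operator producing a boolean.

```java
class BooleanBranch {

    // Returns the message the program prints for a given value of a.
    static String describe(boolean a) {
        if (a == true) {
            return "a is true";
        } else {
            return "a is false";
        }
    }

    public static void main(String[] args) {
        int x = 3;
        int y = 5;
        boolean less = x < y;     // a relational operator yields a boolean
        System.out.println(less); // prints true

        boolean a = false;
        System.out.println(describe(a)); // prints a is false
    }
}
```

In idiomatic Java you would usually write if (a) rather than if (a == true); the explicit comparison is kept here to match the text.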

The equality operator “==” returns “true” if its two operands are equal, and “false” otherwise. Since “a” is false, it is not equal to “true”, so the expression returns “false” and the program proceeds by printing the second statement.

At the beginning of this article, we showed an example of unintended program behavior in JavaScript. The unexpected behavior occurred because the code used addition, an operation that’s undefined for booleans. However, much like integers have operators such as + for addition and * for multiplication, Java’s boolean type has its own operators for logical operations, such as && (AND), || (OR) and ! (NOT). To learn more about boolean types and operators, check out these notes from a Stanford computer science course.
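A short sketch of Java’s logical operators on two illustrative boolean variables. It also shows that && and || short-circuit: the right-hand operand is skipped when the left-hand one already decides the result.

```java
class LogicalOperators {

    public static void main(String[] args) {
        boolean hasTicket = true;
        boolean isMember = false;

        System.out.println(hasTicket && isMember); // AND: true only if both are true, prints false
        System.out.println(hasTicket || isMember); // OR: true if at least one is true, prints true
        System.out.println(!isMember);             // NOT: flips the value, prints true
        System.out.println(hasTicket ^ isMember);  // XOR: true if exactly one is true, prints true

        // Short-circuit: divisor != 0 is false, so the division never runs
        // and no ArithmeticException is thrown.
        int divisor = 0;
        boolean safe = divisor != 0 && 10 / divisor > 1;
        System.out.println(safe); // prints false
    }
}
```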

Characters

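Char is a Java data type for representing single characters such as “!” or “x”. Chars are based on the Unicode standard, which means they can represent characters from most of the world’s scripts: Latin, Arabic, Cyrillic and many others. Strictly speaking, a char holds one UTF-16 code unit, so characters outside Unicode’s Basic Multilingual Plane, such as most emoji, take two chars (a so-called surrogate pair).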
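To see the difference between a char and a full Unicode character, the sketch below stores letters from two non-Latin scripts and the grinning-face emoji (U+1F600). The \u escapes are used only to keep the source file ASCII-safe.

```java
class CharAndUnicode {

    public static void main(String[] args) {
        // Characters from the Basic Multilingual Plane fit in a single char.
        char cyrillic = '\u0416'; // Cyrillic capital letter Zhe
        char arabic = '\u0628';   // Arabic letter Beh
        System.out.println(Character.isLetter(cyrillic) && Character.isLetter(arabic)); // prints true

        // U+1F600 lies outside the BMP, so Java stores it as two chars.
        String emoji = new String(Character.toChars(0x1F600));
        System.out.println(emoji.length());                          // prints 2 (chars)
        System.out.println(emoji.codePointCount(0, emoji.length())); // prints 1 (character)
    }
}
```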

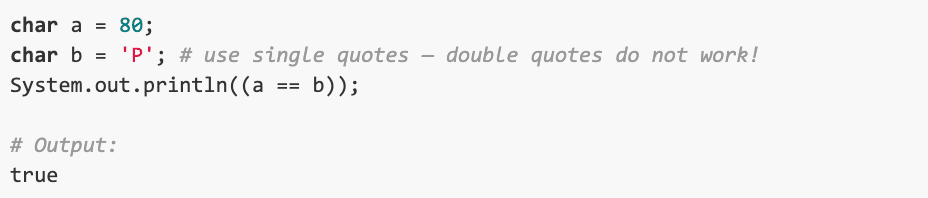

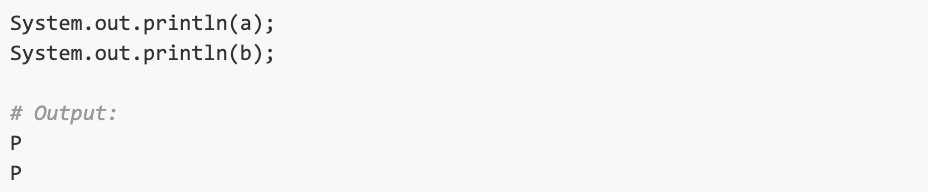

A char takes up 16 bits, meaning that it can hold 65,536 different values (0 to 65,535). Each character in Unicode has a numeric counterpart, called its code point. Because of that, chars can be declared using either a character literal or an integer value. The example below shows how this works:
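A minimal version of that comparison, assuming the two chars are named a and b as in the text that follows:

```java
class CharFromInt {

    public static void main(String[] args) {
        char a = 80;  // an integer value: the Unicode code point 80 (hex 0x50)
        char b = 'P'; // a character literal, written in single quotes

        System.out.println(a == b); // prints true: both hold code point 80
    }
}
```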

We assigned char “a” the integer value 80 and char “b” the letter ‘P’. The equality expression returned “true” because these two representations are equal: assigning an integer to a char stores the character with that Unicode code point, and 80 is the code point of the capital Latin letter ‘P’. As a result, the two variables hold the same value.

Let’s print chars “a” and “b” to verify that this is indeed the case:
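A sketch of that check, reusing the same assumed declarations. Printing a char shows the character; casting it to int shows the number behind it.

```java
class PrintChars {

    public static void main(String[] args) {
        char a = 80;
        char b = 'P';

        // println(char) prints the character, not the number.
        System.out.println(a); // prints P
        System.out.println(b); // prints P

        // Casting to int reveals the code point stored in each char.
        System.out.println((int) a); // prints 80
        System.out.println((int) b); // prints 80
    }
}
```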

Note how we used single quotation marks when we assigned ‘P’ to char “b”. Single quotes mark a char literal, whereas double quotation marks create a String literal, which cannot be assigned to a char; writing “P” with double quotes here will not compile.

Non-Primitive Java Types

In the sections above, we covered primitive data types, which are built into the language and designed to be as simple as possible. In contrast, non-primitive (reference) data types are defined as classes, interfaces or arrays. Many of them come with Java’s standard library, and you can also define your own, in which case it’s up to you as the programmer to define the data and methods of the type.

The main kinds of non-primitive types are classes (including String, Java’s built-in class for text), interfaces and arrays. These types are not necessarily much more complex than their primitive counterparts. In fact, a string is really just a sequence of characters, and an array is simply a fixed-size collection of elements of the same type.
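A brief sketch of the two most common non-primitive types; the values are illustrative. It shows a String as a sequence of chars and arrays as fixed-size collections of one element type.

```java
class StringsAndArrays {

    public static void main(String[] args) {
        // A String is an object holding a sequence of characters.
        String word = "Java";
        System.out.println(word.length());  // prints 4
        System.out.println(word.charAt(0)); // prints J

        // An array holds a fixed number of elements, all of the same type.
        char[] letters = word.toCharArray();
        int[] scores = {90, 75, 88};
        System.out.println(letters.length); // prints 4
        System.out.println(scores[1]);      // prints 75 (indexes start at 0)
    }
}
```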

Become a Java Web Developer

In this article, we taught you the basics of how to initialize and use primitive data types in Java. To become a Java web developer, you’ll have much more to learn, from creating interfaces and subclasses in Java Launch to writing basic queries in SQL. Get started by enrolling in our expert-taught Java Developer Nanodegree program.