Introduction

GPT stands for “Generative Pre-trained Transformer.” It is a type of artificial intelligence model designed to understand and generate human-like text. Developed by OpenAI, GPT models have evolved significantly over the years, starting from GPT-1 in 2018 to the more advanced GPT-4 and beyond.

Each new version has brought improvements in language understanding, reasoning, and contextual awareness. This evolution has made GPT models increasingly capable of tackling more complex tasks, from creative writing to advanced problem-solving.

Note: GPT refers to OpenAI’s family of transformer models. Other leading LLMs include Claude, Gemini, Llama, Mistral, and Cohere Command.

While this article focuses mainly on GPTs, I will also include a tiny example in the Getting Started section that uses the Model Context Protocol (MCP), the open standard Anthropic introduced and Claude supports. I use it actively in my work, and I am genuinely fascinated by how cleanly it lets an agent connect to tools and data.

How GPT Models Work

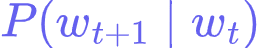

At the core of GPT is the transformer decoder: masked self-attention layers plus feed-forward blocks that learn to predict the next token given a context. Training usually happens in two phases:

1. Pre-training:

- The model learns general language patterns by predicting the next token across a large unlabeled corpus.

2. Adaptation:

- You can adapt the base model to new tasks with fine-tuning, retrieval augmentation, or careful prompting so it follows instructions and uses external knowledge.
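The masked self-attention mentioned above is what makes next-token training work. Each position may attend only to itself and to earlier positions, so the model never peeks at the token it is trying to predict. Here is a minimal single-head sketch in plain Python. It assumes the query, key, and value vectors are given directly, and `causal_self_attention` is a hypothetical helper name. A real GPT computes these vectors with learned projections, uses many heads and layers, and runs on batched tensors.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(q, k, v):
    """Single-head scaled dot-product attention with a causal mask.

    q, k, v: lists of T vectors (lists of floats), one per position.
    Position t only scores positions 0..t; later positions are masked out.
    """
    d = len(q[0])
    out = []
    for t in range(len(q)):
        # Scaled dot products against visible positions only.
        scores = [sum(a * b for a, b in zip(q[t], k[j])) / math.sqrt(d) for j in range(t + 1)]
        weights = softmax(scores)
        # Output at t is the attention-weighted sum of the visible value vectors.
        out.append([sum(w * v[j][i] for j, w in enumerate(weights)) for i in range(len(v[0]))])
    return out

x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_self_attention(x, x, x)
print(out[0])  # [1.0, 0.0]: the first position can only attend to itself
```

Because of the mask, changing a later token leaves every earlier output unchanged. This is what lets one forward pass score every next-token prediction in a training sequence at once.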

To make this concrete, I prepared two tiny, runnable demos that mirror the same next-token idea on a toy dataset.

The scripts are a bit long for this article, so I put them in Codeshare.

Two bite-size runnable examples

1. Counts-based baseline (non-AI):

Builds a word-level bigram table from a single sentence, then predicts the next word and generates a short continuation using greedy decoding.

2. Neural version with PyTorch:

Reuses the same dataset and interfaces, but replaces the counts with a tiny learned model that maps the previous word to a distribution over the next word.
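The Codeshare scripts are not reproduced here, but the counts-based idea fits in a short sketch. This is an illustrative version, not the Codeshare script. The names `build_bigram_counts`, `next_word_probs`, and `greedy_continue` and the default `add_k=0.5` are assumptions chosen for the example.

```python
from collections import Counter, defaultdict

def build_bigram_counts(text):
    """Count how often each word follows each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts, sorted(set(words))

def next_word_probs(counts, vocab, context, add_k=0.5):
    """Add-k smoothed P(next | context): every vocabulary word gets add_k pseudo-counts."""
    row = counts[context]
    total = sum(row.values()) + add_k * len(vocab)
    return {w: (row[w] + add_k) / total for w in vocab}

def greedy_continue(counts, vocab, start, max_new_tokens=5, add_k=0.5):
    """Greedy decoding: always append the single most likely next word."""
    words = [start]
    for _ in range(max_new_tokens):
        probs = next_word_probs(counts, vocab, words[-1], add_k)
        words.append(max(probs, key=probs.get))
    return " ".join(words)

counts, vocab = build_bigram_counts("gpt models learn patterns in text and predict the next word")
print(next_word_probs(counts, vocab, "the")["next"])  # about 0.23
print(greedy_continue(counts, vocab, "gpt"))  # gpt models learn patterns in text
```

With `add_k=0.5` and the 11-word vocabulary of this sentence, P('next' | 'the') works out to 1.5 / 6.5 ≈ 0.23. The exact value depends on `add_k` and on how the vocabulary is built, so it need not match the Codeshare output.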

Both demos are intentionally minimal. They do not implement a full transformer, but they illustrate the core loop GPTs use:

- Compute P(next token ∣ context).

- Choose a token.

- Append it to the context.

- Repeat.
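The four steps above are the same whether the probabilities come from a count table or from a transformer. This model-agnostic sketch assumes a hypothetical `next_token_probs` callable that maps the current token list to a {token: probability} dict. The toy table, with made-up probabilities, stands in for a real model. Setting `greedy=False` samples a token instead of taking the most likely one.

```python
import random

def generate(next_token_probs, context, max_new_tokens, greedy=True, rng=None):
    """Autoregressive decoding: compute P(next | context), choose, append, repeat."""
    rng = rng or random.Random(0)  # fixed seed so sampled runs are reproducible
    tokens = list(context)
    for _ in range(max_new_tokens):
        probs = next_token_probs(tokens)  # 1. compute P(next token | context)
        if greedy:
            choice = max(probs, key=probs.get)  # 2a. pick the most likely token
        else:
            choice = rng.choices(list(probs), weights=list(probs.values()))[0]  # 2b. sample
        tokens.append(choice)  # 3. append it to the context
    return tokens  # 4. the loop repeats until the token budget is spent

# Toy "model" with made-up probabilities that only looks at the last token.
TOY_TABLE = {
    "gpt": {"models": 0.9, "text": 0.1},
    "models": {"learn": 0.8, "gpt": 0.2},
    "learn": {"gpt": 1.0},
    "text": {"gpt": 1.0},
}

def toy_model(tokens):
    return TOY_TABLE[tokens[-1]]

print(generate(toy_model, ["gpt"], 3))  # ['gpt', 'models', 'learn', 'gpt']
print(generate(toy_model, ["gpt"], 5, greedy=False))
```

Greedy decoding always returns the same continuation for the same context. Sampling can vary from run to run unless the random generator is seeded, as it is here.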

How to run them locally

1. Counts-based script:

- Open the Codeshare link and copy the code into a file named tiny_bigram_counts.py.

- Run: python tiny_bigram_counts.py.

2. PyTorch script:

- pip install torch

- Open the Codeshare link and copy the code into tiny_bigram_torch.py.

- Run: python tiny_bigram_torch.py.

Python 3.9+ is recommended.

What you should see

Both scripts start from the same tiny dataset:

"gpt models learn patterns in text and predict the next word"

They each run two quick demos:

1. Next-word prediction for the context ‘the’:

- Counts version prints something like:

Context word: 'the'
P('next' | 'the') = 0.265

Although the sentence only contains “the next”, the probability is lower than 1.0 because of the smoothing added by the add_k parameter.

- PyTorch version prints a ranked list with probabilities. After a short training loop, “next” should be the top candidate with a probability close to 1 (printed as 1.000 after rounding).

2. Short continuation starting from ‘gpt’:

Both versions produce max_new_tokens words.

Running the scripts multiple times may generate different sentences because of smoothing. The torch example also produces different sentences, but there the cause is a slightly different dataset:

"gpt models learn patterns in text and predict the next word the models are useful"

In this dataset, “models” is followed by two different words, “learn” and “are”. “the” is also followed by two different words, “next” and “models”. Each option therefore has a 50% chance of being chosen.
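A quick count over the extended sentence confirms the 50/50 split. Sampling in proportion to those counts then shows why repeated runs can diverge. The seed and the number of draws are arbitrary choices for this sketch.

```python
import random
from collections import Counter, defaultdict

TEXT = "gpt models learn patterns in text and predict the next word the models are useful"
words = TEXT.split()

# Count which words follow each word in the extended dataset.
successors = defaultdict(Counter)
for prev, nxt in zip(words, words[1:]):
    successors[prev][nxt] += 1

print(dict(successors["models"]))  # {'learn': 1, 'are': 1}
print(dict(successors["the"]))     # {'next': 1, 'models': 1}

# Sampling in proportion to the counts picks each option about half the time.
rng = random.Random(42)
options = list(successors["the"])
draws = Counter(rng.choices(options, weights=list(successors["the"].values()), k=10_000))
print(draws["next"] / 10_000)  # close to 0.5
```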

Why this helps you reason about GPTs

- The counts-based demo shows the shape of the problem: estimate next-word probabilities, then decode.

- The neural demo shows how a learned model can replace hand-built probabilities.

- In a real GPT, the context is many previous tokens, the model is a stack of transformer blocks, and training spans billions of tokens. The control flow is the same: compute next-token probabilities, pick a token, and loop.
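To make the second bullet concrete without installing PyTorch, here is a dependency-free stand-in for the neural demo. It is not the Codeshare script. Instead of counting, it learns one row of logits per previous word by stochastic gradient descent on next-word cross-entropy. `train_bigram_logits` and `rank_next` are hypothetical names, and `steps` and `lr` are illustrative values.

```python
import math

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    s = sum(exps)
    return [e / s for e in exps]

def train_bigram_logits(text, steps=200, lr=1.0):
    """Learn logits[prev][next] by minimizing next-word cross-entropy with SGD."""
    words = text.split()
    vocab = sorted(set(words))
    idx = {w: i for i, w in enumerate(vocab)}
    logits = [[0.0] * len(vocab) for _ in vocab]  # all zeros means uniform predictions
    pairs = [(idx[a], idx[b]) for a, b in zip(words, words[1:])]
    for _ in range(steps):
        for prev, nxt in pairs:
            probs = softmax(logits[prev])
            # Gradient of -log p[nxt] w.r.t. logits[prev][j] is probs[j] - 1{j == nxt}.
            for j, p in enumerate(probs):
                logits[prev][j] -= lr * (p - (1.0 if j == nxt else 0.0))
    return vocab, idx, logits

def rank_next(vocab, idx, logits, context, top=3):
    """Return the `top` most likely next words with their probabilities."""
    probs = softmax(logits[idx[context]])
    return sorted(zip(vocab, probs), key=lambda wp: wp[1], reverse=True)[:top]

vocab, idx, logits = train_bigram_logits("gpt models learn patterns in text and predict the next word")
for word, p in rank_next(vocab, idx, logits, "the"):
    print(f"{word}: {p:.3f}")
```

On the single-sentence dataset, “the” is always followed by “next”. The learned probability for that pair therefore climbs toward 1 as training continues, matching what the PyTorch version reports.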

📌 Popular GPT Versions

- GPT-2: First widely popular version for long-form generation and coherent paragraphs.

- GPT-3: Scaled parameters and data, which made few-shot prompting practical for many tasks.

- GPT-4: Better instruction following, stronger reasoning, safer outputs, and early multimodal abilities.

- GPT-5: Focused on more reliable multi-step reasoning, improved tool use and function calling, stronger instruction adherence, and better efficiency, which lowers cost and latency for production apps.

✅ Applications of GPT Models

Common uses include:

- Text generation for blogs, product copy, and support macros.

- Summarization and extraction from long documents.

- Coding assistance for scaffolding functions, tests, and refactors.

- Retrieval-augmented answers grounded in your own docs and data.

- Agentic workflows that call tools, query APIs, and store state.

As a less common example, I am building a data application where an AI agent is the primary interface. The functions below are illustrative rather than the real ones, but under the hood I might expose a few reliable tools through MCP, such as:

- get_filing(ticker, year): fetches a 10-K from EDGAR and returns the MD&A text.

- extract_facts(doc_id, items): parses financial facts and maps them to a normalized schema.

- run_sql(query): queries a warehouse table that tracks point-in-time fundamentals.

The agent sits on top of these tools. A typical request might be: “Compare last quarter’s revenue growth and gross margin for MSFT and AAPL, then summarize risks.” The agent uses MCP to call run_sql and get_filing, cites specific lines from the filings, and returns a short decision note. This gives analysts a natural language interface without giving the model free access to everything. Tool boundaries plus logging make the system debuggable and auditable.
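The tool-boundary idea can be sketched in a few lines. The agent may only call functions on an explicit allow-list, and every attempt, including rejected ones, lands in an audit log. The tool bodies below are hypothetical stubs named after the illustrative tools above. `call_tool`, `ALLOWED_TOOLS`, `AUDIT_LOG`, and the `fundamentals` table are assumed names, not part of MCP or any SDK.

```python
import json
import time

AUDIT_LOG = []  # in production this would go to durable, append-only storage

def get_filing(ticker: str, year: int) -> str:
    # Hypothetical stub: a real tool would fetch the 10-K from EDGAR.
    return f"[MD&A text for {ticker} {year}]"

def run_sql(query: str) -> str:
    # Hypothetical stub. The prefix check is a naive read-only guard;
    # a real deployment would rely on a read-only database role instead.
    if not query.lstrip().lower().startswith("select"):
        raise PermissionError("run_sql accepts SELECT statements only")
    return f"[rows for: {query}]"

# Only tools on this allow-list are reachable by the agent.
ALLOWED_TOOLS = {"get_filing": get_filing, "run_sql": run_sql}

def call_tool(name: str, arguments: dict):
    """Dispatch one agent tool call, recording every attempt in AUDIT_LOG."""
    entry = {"ts": time.time(), "tool": name, "arguments": json.dumps(arguments, sort_keys=True)}
    AUDIT_LOG.append(entry)
    if name not in ALLOWED_TOOLS:
        entry["status"] = "rejected"
        raise PermissionError(f"tool {name!r} is not exposed to the agent")
    try:
        result = ALLOWED_TOOLS[name](**arguments)
    except Exception as exc:
        entry["status"] = f"error: {exc}"  # failed calls are logged, then re-raised
        raise
    entry["status"] = "ok"
    return result

print(call_tool("run_sql", {"query": "SELECT * FROM fundamentals"}))
print(AUDIT_LOG[-1]["status"])  # ok
```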

💡 Ethical Considerations

As Uncle Ben famously says in the Spider-Man universe, “With great power comes great responsibility.” GPT models must be used thoughtfully, considering:

- Bias: Models can reflect bias present in pre-training data. Add guardrails and measure outcomes across slices of users.

- Misinformation: Require evidence for claims, ground important answers in your own knowledge base, and log citations.

- Responsible use: Keep a human in the loop for critical decisions, design safe tool permissions, and implement rate limits and anomaly alerts.
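For the rate limits mentioned above, a token bucket is a common, simple choice. Each tool call spends a token, and tokens refill at a fixed rate. This single-process sketch is illustrative. `TokenBucket` is a hypothetical class, and the capacity and refill rate are placeholder values you would tune for your traffic.

```python
import time

class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` calls per second."""

    def __init__(self, capacity: float, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = capacity
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill for the elapsed time, capped at the bucket's capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

# Example with a fake clock: a 2-call burst, refilled at 1 call per second.
fake_now = [0.0]
bucket = TokenBucket(capacity=2, rate=1.0, clock=lambda: fake_now[0])
print([bucket.allow() for _ in range(3)])  # [True, True, False]
fake_now[0] = 1.0
print(bucket.allow())  # True: one token refilled after a second
```

A rejected call is a natural hook for the anomaly alerts in the same bullet. A sudden burst of denials usually means an agent is looping or being misused.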

Getting Started with GPT APIs

You can get hands-on quickly with two paths: a direct model API and MCP for tool-connected agents.

1. Quick OpenAI example:

The OpenAI Responses API is a good default if you want instruction-following text, code help, or structured outputs.

Here is a minimal Python snippet that generates a short product blurb.

from openai import OpenAI

# The client reads your API key from the OPENAI_API_KEY environment variable.
client = OpenAI()

def generate_blurb(product_name: str) -> str:
    """Create a short marketing blurb for an e-commerce product.

    Args:
        product_name: The product to describe.

    Returns:
        A short, catchy description that fits in about 30 words.
    """
    resp = client.responses.create(
        model="gpt-4o-mini",
        input=f"Write a 30-word promo blurb for '{product_name}'. Keep it friendly and clear.",
    )
    # output_text joins the text parts of the model's reply into one string.
    return resp.output_text

The Responses API unifies text generation and tool use, and it is the path OpenAI is investing in for agent-style applications.

2. Claude plus MCP for tool-connected agents:

MCP, the Model Context Protocol, lets a server advertise your tools, with names, descriptions, and JSON Schemas, to an MCP client such as Claude Desktop, which makes them available to Claude. Claude then decides whether to call a tool when answering a question.

Let’s say we have a tiny MCP server. It exposes a single tool that returns the last 7 days of sales for a SKU from a local SQLite database.

Since this article focuses on GPT models, I’m not going to include Python code for this example. Instead, I’ll give a general overview with just enough detail for you to know what your server should provide.

1. What methods your MCP server should handle:

MCP messages are JSON-RPC 2.0 requests sent over stdio or HTTP, so instead of separate REST links, the server answers two methods:

- tools/list to advertise available functions with JSON Schemas so the client can discover them.

- tools/call to execute a function by name with typed inputs.

2. What each method should return:

tools/list

Result:

{
  "tools": [
    {
      "name": "query_sales",
      "description": "Return last 7 days of sales for a SKU.",
      "inputSchema": {
        "type": "object",
        "properties": { "sku": { "type": "string" } },
        "required": ["sku"]
      }
    }
  ]
}

tools/call

Request params:

{ "name": "query_sales", "arguments": { "sku": "ABC-123" } }

Result:

{ "content": [ { "type": "text", "text": "{\"sku\": \"ABC-123\", \"units\": 74, \"revenue\": 12150.0}" } ] }

These two methods, on top of the initialize handshake that opens every MCP session, are enough for Claude Desktop or any MCP-aware client to discover your tool and call it.

Conclusion

GPT models are strong generalists that can read, write, summarize, and reason with text. Claude models are a compelling alternative with excellent writing and coding skills, and the MCP standard makes both families more useful by giving them controlled access to real tools and data. If you are building internal apps, consider a simple stack: a direct model API for pure text tasks, and an MCP server for anything that needs data or actions. That mix has worked well for my current data application project, where the AI agent is the primary interface and MCP keeps the tool calls safe and observable.

Want to build these skills step by step? Check out these Udacity programs: