The Real-World Applications of Image Generation using Latent Diffusion Models, the Architecture Behind Stable Diffusion

What is Stable Diffusion?

Stable Diffusion is a text-to-image model that lets anyone turn a written prompt into a detailed image in seconds. It is a groundbreaking model because it runs on consumer GPUs while still producing high-quality results without needing pre- or post-processing. Beyond art, it can also generate images for educational or entertainment purposes. Stability AI, an AI startup based in London, developed the model in collaboration with academic researchers and released it as open source in 2022.

Image Synthesis

The process of generating images with artificial intelligence is known as image synthesis. It can be done with several deep learning techniques, including variational autoencoders (VAEs), generative adversarial networks (GANs), and diffusion models. Image synthesis has several potential business applications: it can create realistic images for product design, marketing, and education, and it can generate images in a specific style, such as that of a painting or a photograph. For example, a product designer can use image synthesis to create realistic images of products that are not yet on the market, helping companies test new product ideas and gather feedback from potential customers.

How Does Stable Diffusion Work?

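Curious to know how Stable Diffusion generates high-quality images while remaining computationally efficient? The architecture that powers it is the Latent Diffusion Model (LDM). Instead of working directly on pixels, an LDM first uses an autoencoder to compress images into a much smaller latent representation. Generation starts from random noise in this latent space: a denoising network (a U-Net conditioned on the text prompt through a text encoder) removes the noise step by step until a clean latent remains, and the autoencoder's decoder then turns that latent back into a full-resolution image. During training, noise is added to the latent of a real image and the model learns to predict that noise, so training minimizes the difference between the predicted noise and the noise that was actually added. Stable Diffusion is a specific, large-scale latent diffusion model trained on image-text pairs. Because its expensive denoising loop runs on compressed latents rather than full-size images (in Stable Diffusion v1, a 512×512 RGB image becomes a 64×64×4 latent), it is far cheaper to train and run than a diffusion model that operates in pixel space, which is what makes it practical on consumer GPUs.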
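To make the training objective and the step-by-step denoising more concrete, here is a minimal NumPy sketch of the diffusion math on a latent-sized array. It is an illustration under stated assumptions, not Stable Diffusion's implementation: the 1,000-step linear noise schedule is chosen for simplicity, `make_oracle_denoiser` is a hypothetical stand-in for the trained, text-conditioned U-Net (it cheats by knowing the clean latent), and the autoencoder's encode and decode steps are left out. The update inside `sample` is the standard DDPM reverse step.

```python
import numpy as np

# Illustrative settings (assumptions, not Stable Diffusion's exact config):
# 1000 steps and a linear beta schedule from 1e-4 to 0.02.
T = 1000
betas = np.linspace(1e-4, 0.02, T)
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)  # fraction of the original signal kept after t steps


def add_noise(z0, t, eps):
    """Forward process: jump straight from a clean latent z0 to noisy step t."""
    return np.sqrt(alpha_bars[t]) * z0 + np.sqrt(1.0 - alpha_bars[t]) * eps


def training_loss(eps_pred, eps):
    """Training objective: mean squared error between predicted and true noise."""
    return np.mean((eps_pred - eps) ** 2)


def make_oracle_denoiser(z0):
    """Hypothetical stand-in for the trained, text-conditioned U-Net.

    It knows the clean latent z0, so it can return the exact noise in z_t.
    """
    def predict_noise(z_t, t):
        return (z_t - np.sqrt(alpha_bars[t]) * z0) / np.sqrt(1.0 - alpha_bars[t])
    return predict_noise


def sample(predict_noise, shape, rng):
    """Reverse process: start from pure noise and denoise one step at a time."""
    z = rng.standard_normal(shape)
    for t in reversed(range(T)):
        eps_pred = predict_noise(z, t)
        # Remove this step's share of the predicted noise (DDPM update rule).
        z = (z - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_pred) / np.sqrt(alphas[t])
        if t > 0:
            # Re-inject a small amount of fresh noise on every step except the last.
            z = z + np.sqrt(betas[t]) * rng.standard_normal(shape)
    return z


rng = np.random.default_rng(0)
# A 4x64x64 latent: the size Stable Diffusion v1 uses for a 512x512 image.
z0 = rng.standard_normal((4, 64, 64))

# Training step: noise a latent at a random timestep and score a prediction.
t = int(rng.integers(T))
eps = rng.standard_normal(z0.shape)
z_t = add_noise(z0, t, eps)
oracle = make_oracle_denoiser(z0)
print(training_loss(oracle(z_t, t), eps))  # close to 0: the oracle predicts the noise exactly

# Sampling: with a perfect noise predictor, the loop lands back on z0.
z_hat = sample(oracle, z0.shape, rng)
print(np.allclose(z_hat, z0))  # True
```

Because the oracle predicts the noise perfectly, the loss is essentially zero and sampling recovers `z0`. A real model only approximates the noise, and the text prompt, fed to the U-Net, steers which image the denoising converges toward.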

Pros and Cons of Image Generation Models

Image generation models have both advantages and disadvantages in the realm of media. On the one hand, they can enable new creative applications, and reduced training and inference costs make the technology more accessible. On the other hand, they make it easier to spread manipulated media or misinformation, such as deepfakes. Another concern is that generative models can leak sensitive or personal information contained in their training data, which is especially troubling if that data was collected without explicit consent. Additionally, deep learning models tend to reproduce or even amplify biases present in their training data. When using powerful AI tools, it is important to consider their ethics and potential societal implications.

The Future of Stable Diffusion

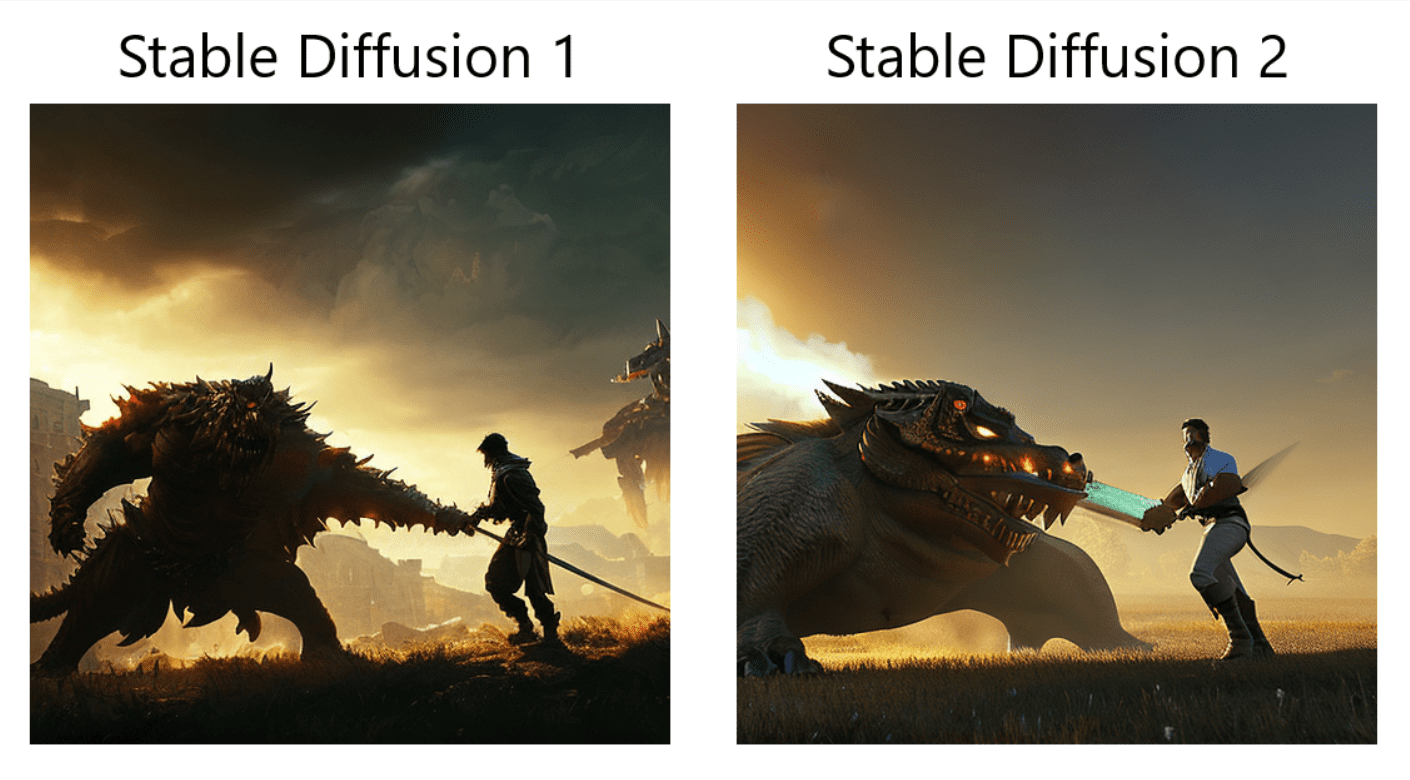

Where are we going from here? Stability AI recently released Stable Diffusion version 2, with several notable improvements and features over the original version 1 release. The key change in Stable Diffusion 2 is a new text encoder. Stable Diffusion version 1 uses OpenAI's CLIP, which was trained on datasets that are not publicly available. Stable Diffusion 2 replaces it with OpenCLIP, created by LAION with the backing of Stability AI. According to Stability AI, this change noticeably improves the quality of the generated images. The release also includes text-to-image models that generate images at default resolutions of 512×512 and 768×768 pixels. As AI researchers and companies continue their research and development in this area, we can expect further technical improvements, new features, and more real-world applications of image synthesis.
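The two default resolutions translate directly into different latent sizes, and the latent size is what the denoising network actually processes. The short sketch below does that arithmetic. It assumes an autoencoder with 8× spatial downsampling and 4 latent channels, the setup used by Stable Diffusion's released models; treat those constants as assumptions and check them against the configuration of the specific model you use.

```python
# Assumed autoencoder settings: 8x spatial downsampling and 4 latent channels.
DOWNSAMPLE = 8
LATENT_CHANNELS = 4


def latent_shape(height, width):
    """Return the (channels, height, width) latent shape for an RGB image."""
    if height % DOWNSAMPLE or width % DOWNSAMPLE:
        raise ValueError("image size must be a multiple of the downsampling factor")
    return (LATENT_CHANNELS, height // DOWNSAMPLE, width // DOWNSAMPLE)


def compression_ratio(height, width):
    """How many pixel values (3 RGB channels) map to each latent value."""
    c, h, w = latent_shape(height, width)
    return (3 * height * width) / (c * h * w)


for size in (512, 768):
    # Prints: 512 (4, 64, 64) 48.0, then 768 (4, 96, 96) 48.0
    print(size, latent_shape(size, size), compression_ratio(size, size))
```

Under these assumptions, both resolutions compress 48-fold, but a 768×768 image still yields a latent with 2.25 times as many values as a 512×512 one, so each denoising step at the higher resolution does proportionally more work.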

Expand your AI skills

Interested in learning more about AI? Get hands-on AI and machine learning experience with Udacity’s Generative AI courses. Start learning online today!