You’re admiring a sleek autonomous vehicle on the road, when suddenly you see a dog run into the intersection. The pup seems doomed, but the driverless car slows and stops just short of disaster. Given that no quick-thinking human’s hand was on the wheel, how was this accident averted?

Autonomous vehicles are among the latest applications of sensor fusion, which combines data from sensors that track both stationary and moving objects to approximate the way a human perceives the surroundings. As you might deduce from its name, the discipline fuses the signals of multiple sensors to determine an object’s position, trajectory, and speed.

In this article, we explore sensor fusion algorithms, or the algorithms behind the mechanisms that make autonomous vehicles safer than you might think. We’ll talk about why they’re handy and how they work, and we’ll touch on some that power real-world technologies.

What are Sensor Fusion Algorithms?

Sensor fusion algorithms combine sensory data that, when properly synthesized, help reduce uncertainty in machine perception. They take on the task of combining data from multiple sensors — each with unique pros and cons — to determine the most accurate positions of objects. As we’ll see shortly, the accuracy of sensor fusion promotes safety by allowing other systems to respond in a timely and situation-appropriate manner.

Why Sensor Fusion Algorithms are Useful

In real-world systems, sensor readings are noisy, and in the world of sensors noise translates to errors. A single sensor is therefore not reliable enough on its own to identify the surrounding environment.

Let’s continue with our car example. Many modern cars have parking sensors that help you avoid hitting other cars when parking in tight spaces. If you’re about to hit something, some cars will also activate the brakes, protecting both you and whatever you’re about to hit. However, it can be dangerous to use information from a single parking sensor to stop the car if you’re too close to an object — what if the sensor receives noisy information? What if the sensor malfunctions?

Now we see why cars with automatic brake activation, such as Teslas, use information obtained from multiple sensors. Sensor fusion sometimes relies on data from several of the same type of sensor (such as a parking sensor), known as competitive configuration. However, combining different types of sensors (such as fusing object proximity data with speedometer data) usually yields a more comprehensive understanding of the object under observation. Let’s take a closer look at this kind of setup, called complementary configuration.

In foggy weather, a radar sensor — great for sensing the velocity and bearing of an object — provides more precision than a LIDAR sensor can. In clear weather, you’ll want to rely more on a LIDAR sensor’s spatial resolution than that of a radar sensor. Sensors have various strengths and weaknesses, and a good algorithm takes multiple types of sensors into consideration. The data from these different sensors can be complementary.

Because different types of sensors have their own pros and cons, a strong algorithm will also give preference to some data points over others. For example, the speed sensor is probably more precise than the parking sensors, so you’ll want to give it more weight. Or perhaps the speed sensor is not very precise, and so you want to rely more on the proximity sensors. The variations are nearly infinite and depend on the specific use case.
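One common way to give some readings more weight than others is inverse-variance weighting: each sensor's estimate is weighted by how precise it is. The snippet below is a minimal sketch of that idea, not a production method. The function name `fuse_weighted`, the two sensors, and all of the numbers are hypothetical, and it assumes both sensors measure the same quantity (distance to an obstacle) with independent noise.

```python
def fuse_weighted(estimates):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    A smaller variance means a more precise sensor, so it gets a larger weight.
    Assumes every pair measures the same quantity with independent noise.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    fused_variance = 1.0 / total_weight  # never larger than the best single sensor
    return fused_value, fused_variance


# Hypothetical distance-to-obstacle readings in metres: (value, variance)
parking_sensor = (2.10, 0.04)  # noisier sensor
radar = (2.00, 0.01)           # more precise sensor

fused_value, fused_variance = fuse_weighted([parking_sensor, radar])
print(f"fused distance: {fused_value:.2f} m, variance: {fused_variance:.3f}")
```

The fused estimate lands closer to the more precise sensor, and its variance is lower than that of either input, which is the point of fusing them.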

How Sensor Fusion Algorithms Work

Sensor fusion algorithms process all inputs and produce output with high accuracy and reliability, even when individual measurements are unreliable.

Let’s take a look at the equations that make these algorithms mathematically sound. A sensor fusion algorithm’s goal is to produce a probabilistically sound estimate of an object’s kinematic state. To calculate this state, an engineer uses two equations and two models: a predict equation that employs a motion model, and an update equation using a measurement model.

So, what are these motion and measurement models? The motion model deals with the dynamics of an object (in our example, a car) across increments of time. It says that the current state of the car is drawn from a range of values that depends on its state during the last time-step. The measurement model describes how the car’s sensors behave: the current reading of, say, the radar is drawn from a range of values that depends on the current state of the car.

To make sense of these models in the context of sensor fusion, let’s look at two equations: one that predicts the state of the car, and one that continuously updates that prediction. The predict equation uses the previous estimate of the state (the range of possible state values calculated from the last round of predict-update equations) along with the motion model to predict the current state. This prediction is then updated (via the update equation) by combining the sensory input with the measurement model. We end up with a new range of possible state values, which becomes the input to the next predict equation, and the cycle repeats with each new measurement.
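The predict-update cycle can be sketched with a tiny discrete example. This is an illustrative toy, assuming a car on a road divided into five cells. The "range of possible state values" is a list of probabilities, one per cell. The motion probabilities, the sensor hit rate, and the function names are all made up for the sketch.

```python
def predict(belief, move_probs):
    """Predict step: push the belief through the motion model."""
    n = len(belief)
    predicted = [0.0] * n
    for cell, prob in enumerate(belief):
        for step, step_prob in move_probs.items():
            target = min(max(cell + step, 0), n - 1)  # clamp at the ends of the road
            predicted[target] += prob * step_prob
    return predicted


def update(belief, likelihood):
    """Update step: weight each cell by the measurement model, then normalise."""
    posterior = [b * l for b, l in zip(belief, likelihood)]
    total = sum(posterior)
    return [p / total for p in posterior]


def sensor_likelihood(n_cells, measured_cell, hit=0.8):
    """Measurement model: the sensor reports the right cell with probability `hit`."""
    miss = (1.0 - hit) / (n_cells - 1)
    return [hit if cell == measured_cell else miss for cell in range(n_cells)]


belief = [0.2] * 5                      # start with no idea where the car is
move_probs = {0: 0.1, 1: 0.8, 2: 0.1}   # the car usually advances one cell per step

for measured_cell in [1, 2, 3]:         # hypothetical sensor readings
    belief = predict(belief, move_probs)
    belief = update(belief, sensor_likelihood(len(belief), measured_cell))

best_cell = max(range(len(belief)), key=belief.__getitem__)
print("most likely cell:", best_cell)
```

Each pass through the loop is one round of the two equations: the output of `update` becomes the input to the next `predict`.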

This process allows us to use sensory input to predict where the car is and where it will be in the next time increment. In turn, this tells us when, and how fast, we need to stop to avoid a collision. For a more thorough explanation, we recommend this article detailing the calculus behind sensor fusion.

The Sensor Fusion Algorithms You Should Know About

To make the idea of a sensor fusion algorithm more concrete, let’s touch on some widely used ones and the kinds of problems they solve.

Algorithms Based on the Central Limit Theorem

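The central limit theorem (CLT) is often mentioned alongside the law of large numbers, but the two say different things. The law of large numbers states that as the sample size of whatever we are measuring increases, the average value of those samples will tend towards the true (expected) value. The CLT states that the distribution of those sample averages will tend towards a normal distribution (a bell curve), provided the underlying distribution has finite variance. A common example is rolling a die: the more rolls we measure, the closer the average value will be to 3.5, or the “true” average, and averages from repeated experiments will cluster around 3.5 in a bell shape.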

How does this relate to sensor fusion? Say we have two sensors, an ultrasonic sensor and an infrared sensor. The more samples we take of their readings, the more closely the distribution of the sample averages will resemble a bell curve centered on the true value, and the narrower that curve becomes. The closer we get to an accurate average value, the less noise will factor into sensor fusion algorithms.
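A quick simulation makes the effect visible. The sketch below assumes a single hypothetical range sensor whose noise is uniform (deliberately not bell-shaped) around a true distance of 1.5 m. It averages groups of readings and reports how widely those averages are spread. The function name and all of the numbers are illustrative.

```python
import random
import statistics


def sample_mean_spread(sample_size, trials=2000, true_distance=1.5, seed=0):
    """Return (mean, standard deviation) of the averages of many groups of readings."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    means = []
    for _ in range(trials):
        readings = [true_distance + rng.uniform(-0.5, 0.5) for _ in range(sample_size)]
        means.append(sum(readings) / sample_size)
    return sum(means) / trials, statistics.stdev(means)


spread_by_size = {n: sample_mean_spread(n) for n in (1, 4, 100)}
for n, (mean, spread) in spread_by_size.items():
    print(f"{n:>3} readings per average: mean={mean:.3f} m, spread={spread:.3f} m")
```

The spread of the averages shrinks roughly with the square root of the number of readings, so averaging 100 readings gives a much tighter estimate than a single reading does.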

Kalman Filter

A Kalman filter is an algorithm that takes data inputs from multiple sources and estimates unknown values, despite a potentially high level of signal noise. Often used in navigation and control technology, Kalman filters have the advantage of being able to predict unknown values more accurately than individual predictions using single methods of measurement.

Sound familiar? This is exactly what we went over in our car example. Because Kalman filters are among the most widely used sensor fusion algorithms and provide the foundation for understanding the concept itself, sensor fusion is often treated as synonymous with Kalman filtering. One of the most common uses for Kalman filters is in navigation and positioning technology. Because Kalman filtering is recursive, we only need the last estimate of the car’s position and speed, along with the uncertainty of that estimate, to be able to predict its current and future state.
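To show the recursion, here is a minimal one-dimensional Kalman filter sketch. It tracks only the car's position and assumes the velocity is known, which is a simplification; a real filter would usually track position and speed together as a vector. The noise variances and the measurements are invented for the example.

```python
def kalman_predict(position, variance, velocity, dt, process_var):
    """Predict step: move the estimate forward and grow its uncertainty."""
    return position + velocity * dt, variance + process_var


def kalman_update(position, variance, measurement, measurement_var):
    """Update step: blend prediction and measurement according to their variances."""
    gain = variance / (variance + measurement_var)  # Kalman gain, between 0 and 1
    new_position = position + gain * (measurement - position)
    new_variance = (1.0 - gain) * variance
    return new_position, new_variance


# Start with a vague guess: position 0 m with a large variance.
position, variance = 0.0, 100.0

# Hypothetical noisy position readings, one per second, for a car moving at 1 m/s.
measurements = [1.2, 1.9, 3.1, 3.8, 5.1]

for z in measurements:
    position, variance = kalman_predict(position, variance, velocity=1.0, dt=1.0, process_var=0.1)
    position, variance = kalman_update(position, variance, z, measurement_var=0.25)

print(f"estimated position: {position:.2f} m, variance: {variance:.3f}")
```

Only the latest `position` and `variance` are carried from one step to the next; the filter never needs the full history of measurements.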

Algorithms Based on Bayesian Networks

Bayes’ rule, which describes how to revise a probability in light of new evidence, is the basis of the update equation described earlier, which combines the prediction with the measurement model. Bayesian networks, also based on Bayes’ rule, predict the likelihood that any one of several hypotheses is the contributing factor in a given event. K2, hill climbing, iterative hill climbing, and simulated annealing are some well-known search algorithms used to learn the structure of a Bayesian network from data.
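Bayes' rule on its own fits in a few lines. The sketch below weighs two hypotheses ("obstacle" versus "clear") after a proximity sensor fires. It is a plain application of Bayes' rule rather than a full Bayesian network, and the prior and sensor probabilities are made-up numbers.

```python
def posterior(priors, likelihoods):
    """Apply Bayes' rule: posterior is proportional to prior times likelihood."""
    unnormalised = {h: priors[h] * likelihoods[h] for h in priors}
    evidence = sum(unnormalised.values())
    return {h: value / evidence for h, value in unnormalised.items()}


# Belief before any reading: obstacles are rare.
priors = {"obstacle": 0.1, "clear": 0.9}

# Hypothetical sensor model: P(sensor fires | hypothesis)
fires_given = {"obstacle": 0.9, "clear": 0.05}

after_one = posterior(priors, fires_given)     # the first sensor fires
after_two = posterior(after_one, fires_given)  # a second, independent sensor fires too

print(f"after one reading:  P(obstacle) = {after_one['obstacle']:.3f}")
print(f"after two readings: P(obstacle) = {after_two['obstacle']:.3f}")
```

Feeding the first posterior back in as the next prior is what lets evidence from several sensors accumulate, under the assumption that their errors are independent.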

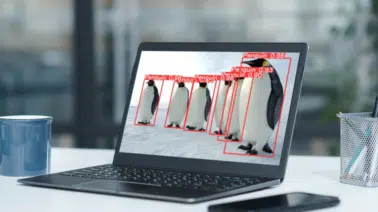

Convolutional Neural Networks

Convolutional neural-network-based methods can simultaneously process many channels of sensor data. By fusing this data, they produce classification results based on image recognition. For example, a robot that uses sensory data to tell faces or traffic signs apart relies on convolutional neural-network-based algorithms.
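To give a feel for how several channels are processed at once, the sketch below implements the core operation of a single convolutional layer in plain Python: one small kernel per channel, with the results summed into one feature map. It is not a trained network. The "camera" and "depth" grids and the kernel weights are hand-picked, hypothetical values chosen so that the layer responds to a vertical edge that appears in both channels.

```python
def conv2d_multichannel(channels, kernels, bias=0.0):
    """Slide one kernel per channel over the input, sum across channels, apply ReLU."""
    height, width = len(channels[0]), len(channels[0][0])
    k = len(kernels[0])  # kernels are assumed square and all the same size
    feature_map = []
    for r in range(height - k + 1):
        row = []
        for c in range(width - k + 1):
            total = bias
            for channel, kernel in zip(channels, kernels):
                for i in range(k):
                    for j in range(k):
                        total += channel[r + i][c + j] * kernel[i][j]
            row.append(max(total, 0.0))  # ReLU activation
        feature_map.append(row)
    return feature_map


# Hypothetical 3x3 inputs: an object occupies the right-hand column.
camera = [[0, 0, 1], [0, 0, 1], [0, 0, 1]]  # brightness: object is bright
depth = [[5, 5, 1], [5, 5, 1], [5, 5, 1]]   # distance in metres: object is near

# Hand-picked 2x2 kernels: brightness rising, and distance falling, left to right.
camera_kernel = [[-1, 1], [-1, 1]]
depth_kernel = [[1, -1], [1, -1]]

feature_map = conv2d_multichannel([camera, depth], [camera_kernel, depth_kernel])
print(feature_map)
```

The feature map is zero where neither channel changes and large where both channels agree that an edge is present. In a real network the kernel weights are learned from data, and many such layers are stacked before the final classification.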

Summary

In this article, we talked about sensor fusion algorithms, why they’re useful, and what a few well-known ones look like. We also touched on the equations behind these algorithms, which can be fairly complicated.

If you’re interested in sensor fusion algorithms, consider taking a professional course with the Sensor Fusion Algorithm Engineer Nanodegree Program.